Readability scores are everywhere. Hemingway Editor shows them. Grammarly shows them. Microsoft Word has them buried in the proofing settings. Most of us treat them the way we treated grades in school: a high score is good, a low score is bad, and we never think too hard about what's being measured.

The Flesch-Kincaid formula counts exactly two things: words per sentence and syllables per word. That's the entire model. It knows nothing about clarity, coherence, logical flow, or whether a piece of writing actually makes sense. Once we understand what this formula does (and what it ignores), we can stop chasing a number and start using it as one signal among many.

How Flesch-Kincaid Actually Works

The Flesch-Kincaid Grade Level formula looks like this:

FK Grade = 0.39 × (total words ÷ total sentences) + 11.8 × (total syllables ÷ total words) − 15.59

In plain language: it multiplies the average sentence length by one weight and the average syllable count per word by another, then subtracts a constant. Longer sentences raise the grade. More syllables raise the grade. The output is supposed to correspond to a U.S. school grade level, so a score of 8.0 means (roughly) that an eighth grader should be able to read it.
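As a rough illustration, the calculation fits in a few lines of Python. This is a minimal sketch that assumes the word, sentence, and syllable counts are already known; the function name `fk_grade` and the sample counts are made up for the example, while 0.39, 11.8, and 15.59 are the standard Flesch-Kincaid constants.

```python
def fk_grade(total_words: int, total_sentences: int, total_syllables: int) -> float:
    """Flesch-Kincaid Grade Level from raw counts (hypothetical helper)."""
    if total_words <= 0 or total_sentences <= 0:
        raise ValueError("need at least one word and one sentence")
    words_per_sentence = total_words / total_sentences
    syllables_per_word = total_syllables / total_words
    return 0.39 * words_per_sentence + 11.8 * syllables_per_word - 15.59


# Hypothetical passage: 100 words, 5 sentences, 150 syllables.
print(round(fk_grade(100, 5, 150), 1))  # 9.9
```

Holding everything else fixed, each extra word per sentence adds 0.39 of a grade, while each extra tenth of a syllable per word adds 1.18. That is why word length tends to dominate the score.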

That's useful information. But it's worth seeing where the formula breaks down.

Consider two sentences:

- "The mitochondria is the powerhouse of the cell." (FK grade: approximately 8)

- "The thing does the stuff for the other thing." (FK grade: approximately 1)

The first sentence communicates a specific fact. The second says nothing. Yet the formula prefers the second one because the words are shorter and simpler.

Here's another blind spot: technical vocabulary. A sentence like "The epidemiologist analyzed seroprevalence data" scores high because those words have many syllables. But for readers in public health, those are the precise, correct terms. Swapping in shorter words would make the writing less clear, not more.

And one more: short, choppy sentences can game the score downward while actually making prose harder to read. "She ran. It rained. The dog barked. They left." scores very low on FK, but reading it feels like being poked in the shoulder repeatedly. Sentence variety matters. FK can't see it.
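The scores in these examples can be checked by hand. The sketch below splits the formula into its sentence-length term and its syllable term. The syllable counts were tallied manually for each example, so they are assumptions of this illustration, and tools with automatic syllable counters may report slightly different grades. The helper name `fk_terms` is hypothetical.

```python
def fk_terms(words: int, sentences: int, syllables: int) -> tuple[float, float, float]:
    """Return (sentence term, syllable term, FK grade) from raw counts."""
    sentence_term = 0.39 * (words / sentences)
    syllable_term = 11.8 * (syllables / words)
    return sentence_term, syllable_term, sentence_term + syllable_term - 15.59


# (label, words, sentences, hand-counted syllables); the counts are assumptions.
examples = [
    ("mitochondria sentence", 8, 1, 14),
    ("thing/stuff sentence", 9, 1, 10),
    ("epidemiologist sentence", 5, 1, 18),
    ("four choppy sentences", 9, 4, 9),
]

for label, words, sentences, syllables in examples:
    sentence_term, syllable_term, grade = fk_terms(words, sentences, syllables)
    print(f"{label}: {sentence_term:.2f} + {syllable_term:.2f} - 15.59 = {grade:.1f}")
```

With these counts the grades come out near 8.2, 1.0, 28.8, and -2.9. In every case the syllable term does most of the work, which is why a vague sentence built from one-syllable words scores as easier than a precise one.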

The Flesch Reading Ease Scale

The related Flesch Reading Ease score works on a 0 to 100 scale, where higher means easier to read:

| Score | Grade Level | Difficulty |
|---|---|---|
| 90-100 | 5th grade | Very easy |
| 80-89 | 6th grade | Easy |
| 70-79 | 7th grade | Fairly easy |
| 60-69 | 8th-9th grade | Standard |
| 50-59 | 10th-12th grade | Fairly difficult |
| 30-49 | College | Difficult |
| 0-29 | Graduate | Very difficult |

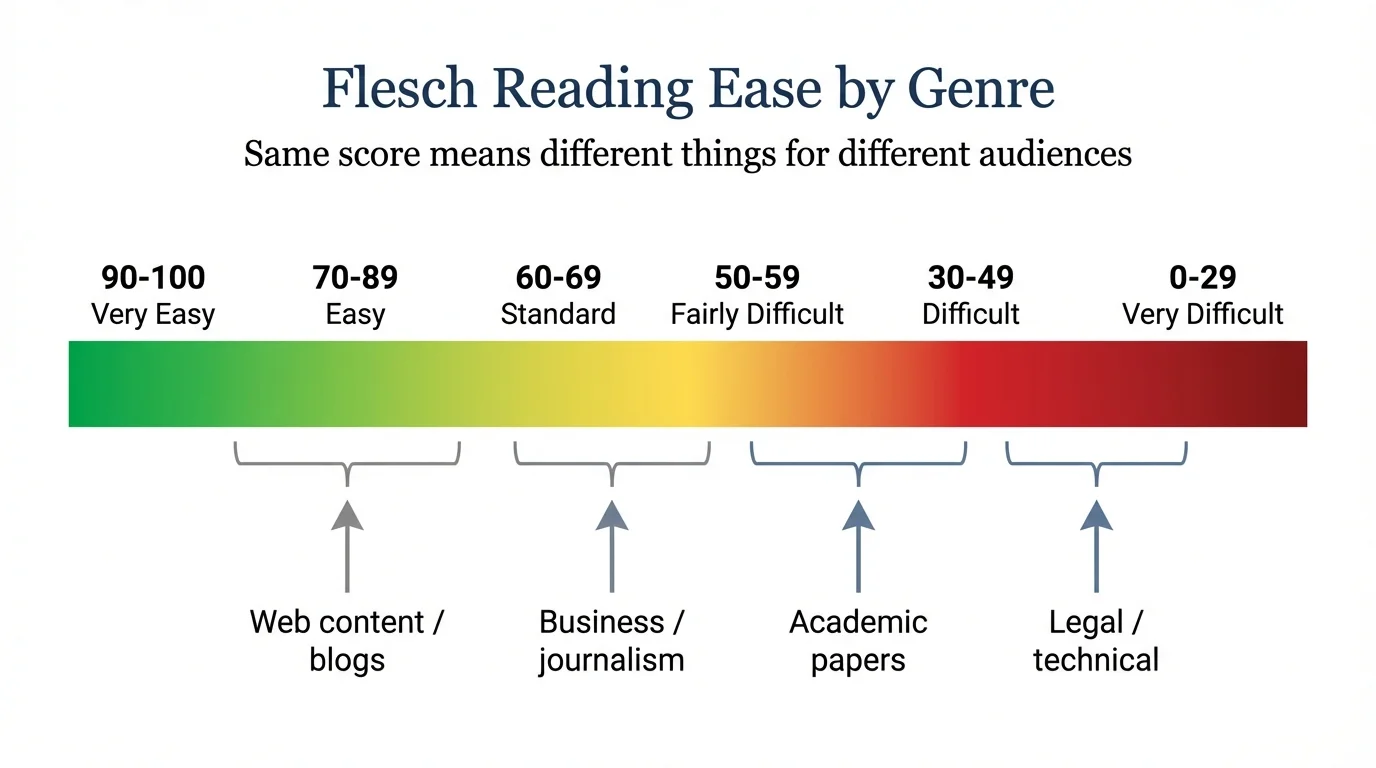
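For reference, the Reading Ease score is built from the same two averages with different weights: 206.835 minus 1.015 times words per sentence, minus 84.6 times syllables per word. The sketch below computes it and maps the result onto the bands in the table. The function names are hypothetical, and assigning a fractional score such as 69.5 to the band of its lower bound is an assumption of this example.

```python
def flesch_reading_ease(total_words: int, total_sentences: int, total_syllables: int) -> float:
    """Flesch Reading Ease from raw counts (higher means easier)."""
    words_per_sentence = total_words / total_sentences
    syllables_per_word = total_syllables / total_words
    return 206.835 - 1.015 * words_per_sentence - 84.6 * syllables_per_word


# (lower bound, difficulty label), checked from the top band down.
BANDS = [
    (90, "Very easy"),
    (80, "Easy"),
    (70, "Fairly easy"),
    (60, "Standard"),
    (50, "Fairly difficult"),
    (30, "Difficult"),
]


def difficulty(score: float) -> str:
    """Map a Reading Ease score to its difficulty label (hypothetical helper)."""
    for lower_bound, label in BANDS:
        if score >= lower_bound:
            return label
    return "Very difficult"  # anything below 30, including negative scores


# Hypothetical passage: 120 words, 8 sentences, 170 syllables.
score = flesch_reading_ease(120, 8, 170)
print(round(score, 1), difficulty(score))  # 71.8 Fairly easy
```

Because both terms are subtracted, longer sentences and longer words push this score down, the opposite direction from the grade-level formula.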

These ranges are useful as rough orientation, but context changes everything. A medical journal article scoring FK 14 (college level) is perfectly appropriate for its audience. A patient handout scoring FK 14 is a failure, because the reader needs to act on the information under stress, possibly in a second language. The same score means completely different things depending on who is reading and why.

What Flesch-Kincaid Misses

The dimensions that actually make writing readable are invisible to any formula that counts syllables and sentence length.

Coherence

Do sentences connect to each other? Does each paragraph follow logically from the one before it? A piece of writing can score FK 6 and still be incoherent, jumping from topic to topic without transitions or logical thread. Coherence is arguably the most important quality of clear prose, and no readability formula can measure it.

Lexical Density

How much information does each sentence carry? FK doesn't distinguish between a sentence padded with empty phrases ("It is important to note that in many cases, the data tends to suggest...") and one that delivers the same idea efficiently ("The data suggest..."). The two can land on similar scores, because FK only sees average sentence length and syllables per word, yet the second reaches the point in a fraction of the words.
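One rough way to put a number on this is lexical density: the share of words that carry content rather than grammatical glue. The sketch below is only an approximation. `FUNCTION_WORDS` is a small hand-picked list invented for this example, and a real analysis would more likely use a fuller stopword list or part-of-speech tagging.

```python
import re

# Hypothetical, deliberately short list of function words for illustration.
FUNCTION_WORDS = {
    "a", "an", "the", "it", "is", "are", "to", "that", "in", "of",
    "and", "or", "for", "on", "with", "many", "this", "these",
}


def lexical_density(text: str) -> float:
    """Fraction of words that are not in the function-word list."""
    words = re.findall(r"[a-z']+", text.lower())
    if not words:
        return 0.0
    content_words = [w for w in words if w not in FUNCTION_WORDS]
    return len(content_words) / len(words)


padded = "It is important to note that in many cases, the data tends to suggest"
concise = "The data suggest"

print(f"padded:  {lexical_density(padded):.2f}")
print(f"concise: {lexical_density(concise):.2f}")
```

With this word list the padded opening comes out around 0.43 and the concise one around 0.67, a difference that FK has no way to register.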

Sentence Variety

FK averages sentence length across an entire piece. A document where every sentence is exactly 15 words and a document where sentences range from 5 to 30 words could produce the same average. But the reading experience is completely different. Varied sentence length creates rhythm. Uniform length creates monotony. This is one of the oldest principles of prose style, and readability formulas are blind to it.
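A small sketch makes the point concrete. It assumes sentence lengths (in words) have already been counted; the two lists are invented for the example, and `length_profile` is a hypothetical helper name.

```python
from statistics import mean, pstdev


def length_profile(sentence_lengths: list[int]) -> dict[str, float]:
    """Summarize sentence lengths (in words) beyond the average alone."""
    return {
        "mean": mean(sentence_lengths),
        "spread": pstdev(sentence_lengths),  # population standard deviation
        "shortest": min(sentence_lengths),
        "longest": max(sentence_lengths),
    }


# Invented data: both documents have six sentences and 90 words in total.
uniform = [15, 15, 15, 15, 15, 15]
varied = [5, 30, 8, 22, 12, 13]

print(length_profile(uniform))
print(length_profile(varied))
```

Both lists average 15 words per sentence, so FK treats them identically. The spread (0 for the first, roughly 8.5 for the second) is where the difference in rhythm shows up.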

Vocabulary Appropriateness

"Utilize" and "use" mean the same thing. "Utilize" has more syllables, so it raises the FK score. But FK can't tell us which word fits our audience. For general readers, "use" is almost always better. For a technical specification where "utilize" has a specific meaning distinct from "use," it might be the right call. The formula just sees syllable counts.

Document Structure

Section organization, paragraph length, heading hierarchy, logical progression from introduction to conclusion. None of this is measured. A well-structured document with clear headings and short paragraphs is easier to read than an unbroken wall of text, even if the FK score is identical.

The Writing Style Analyzer measures multiple dimensions for this reason: readability alone tells us almost nothing about whether writing works.

What to Actually Target

So if FK is limited, what numbers should we pay attention to? Here are some guidelines, keeping in mind that every one of these is context-dependent.

By Writing Context

General web content: FK 7-9. Not because web readers lack education, but because web reading is fast, distracted, and competitive. We're scanning, not studying. Lower grade levels reduce friction.

Business communication: FK 8-11. Internal memos and reports can tolerate slightly more complexity, but the same principle holds: busy professionals skim.

Academic papers: FK 12-16 is typical, and that's fine. But lower is better when the content allows it. The best academic writers express complex ideas in relatively simple sentences.
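These ranges are easy to keep in a small lookup. The sketch below uses the numbers given above; the dictionary keys and the function name `compare_to_target` are hypothetical choices for illustration, and the ranges remain rough guidelines rather than rules.

```python
# Target FK grade ranges taken from the guidelines above.
FK_TARGETS = {
    "general web content": (7, 9),
    "business communication": (8, 11),
    "academic papers": (12, 16),
}


def compare_to_target(fk_grade: float, context: str) -> str:
    """Say whether a grade is below, within, or above the range for a context."""
    low, high = FK_TARGETS[context]
    if fk_grade < low:
        return "below"
    if fk_grade > high:
        return "above"
    return "within"


print(compare_to_target(15.0, "general web content"))     # above
print(compare_to_target(10.2, "business communication"))  # within
```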

A More Useful Metric: Polysyllabic Word Rate

The percentage of words with three or more syllables is often more diagnostic than FK grade level. It isolates vocabulary complexity from sentence structure, which makes it easier to act on.

- Under 15%: Accessible to general audiences

- 15-25%: Comfortable for educated readers

- Over 25%: Likely needs simplification for anyone outside a specialist audience

If our polysyllabic rate is 30%, we know to look for unnecessarily complex word choices. If it's 10% but our FK is still high, we know the issue is sentence length, not vocabulary. That kind of targeted diagnosis is more useful than a single composite score.
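A minimal sketch of the measurement follows. The syllable counter is a crude vowel-group heuristic chosen for brevity, not the method any particular tool uses, and it miscounts some words (for example, adjacent vowels that are pronounced separately). The function names are hypothetical; the thresholds are the ones listed above.

```python
import re


def count_syllables(word: str) -> int:
    """Rough estimate: count vowel groups, then drop a silent trailing 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def polysyllabic_rate(text: str) -> float:
    """Percentage of words estimated to have three or more syllables."""
    words = re.findall(r"[A-Za-z']+", text)
    if not words:
        return 0.0
    polysyllabic = sum(1 for w in words if count_syllables(w) >= 3)
    return 100 * polysyllabic / len(words)


def audience_note(rate: float) -> str:
    """Apply the thresholds from the list above."""
    if rate < 15:
        return "accessible to general audiences"
    if rate <= 25:
        return "comfortable for educated readers"
    return "likely needs simplification outside a specialist audience"


sample = "We utilize simple words"
print(polysyllabic_rate(sample))  # 25.0
print(audience_note(polysyllabic_rate(sample)))
```

Swapping "utilize" for "use" in the sample drops the rate to zero, which is the kind of targeted fix this metric makes visible.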

Sentence Length Variation

Rather than obsessing over average words per sentence, it helps to look at the range. If most sentences cluster between 12 and 18 words, the writing probably feels monotonous. A healthy mix runs from 5 or 6 words up to 25 or 30, with the average landing somewhere around 15-20 for most contexts. The warm-up exercises on this site can help build that kind of natural rhythm.
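One way to check a draft for this kind of clustering is sketched below. The sentence splitter is deliberately naive (it breaks on periods, question marks, and exclamation points, so abbreviations will confuse it), and reading "most sentences" as "more than half" is an assumption of this example. Both function names are hypothetical.

```python
import re


def sentence_lengths(text: str) -> list[int]:
    """Word count of each sentence, using a naive split on . ! ?"""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def clusters_in_band(lengths: list[int], low: int = 12, high: int = 18) -> bool:
    """True when more than half of the sentences fall between low and high words."""
    if not lengths:
        return False
    in_band = sum(1 for n in lengths if low <= n <= high)
    return in_band / len(lengths) > 0.5


print(sentence_lengths("She ran. It rained. The dog barked. They left."))  # [2, 2, 3, 2]
print(clusters_in_band([14, 15, 13, 16, 12, 17]))  # True
print(clusters_in_band([5, 30, 8, 22, 12, 13]))    # False
```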

Putting It Together

A readability score is a thermometer reading. It tells us something real (how long our sentences and words are), but a thermometer can't diagnose illness, and FK can't diagnose unclear writing.

The better approach is to treat readability as one instrument in a dashboard. The Writing Style Analyzer shows FK alongside sentence variety, lexical density, and vocabulary sophistication precisely because no single metric captures whether writing is actually clear.

When reviewing a draft, we might check FK to flag obvious problems (a blog post scoring FK 15 probably has sentences that need splitting), then look at sentence length variation to catch monotony, then check polysyllabic word rate to spot unnecessary jargon. Each metric catches something the others miss.
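That sequence of checks can be sketched as a single pass over numbers we have already measured, however they were obtained. Everything here is illustrative: `review_draft` is a hypothetical function, the FK ceiling is whatever target suits the context, and the 12-18 word band and 25% threshold are the rough guidelines from earlier, not hard rules.

```python
def review_draft(fk_grade: float, fk_target_max: float,
                 sentence_lengths: list[int], polysyllabic_rate: float) -> list[str]:
    """Run the three checks in order and collect plain-language notes."""
    notes = []
    # 1. FK flags obvious problems such as overlong sentences.
    if fk_grade > fk_target_max:
        notes.append("FK above target: look for sentences to split")
    # 2. Sentence length variation catches monotony.
    in_band = sum(1 for n in sentence_lengths if 12 <= n <= 18)
    if sentence_lengths and in_band / len(sentence_lengths) > 0.5:
        notes.append("Sentence lengths cluster at 12-18 words: vary the rhythm")
    # 3. Polysyllabic word rate spots unnecessary jargon.
    if polysyllabic_rate > 25:
        notes.append("Polysyllabic rate over 25%: check for unnecessary jargon")
    return notes


# Hypothetical blog draft measured at FK 15 with a web target ceiling of 9.
for note in review_draft(15.0, 9, [14, 15, 13, 16, 24, 17], 30.0):
    print(note)
```

An empty list does not mean the draft is clear. It only means none of these three instruments raised a flag.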

For a deeper look at how these metrics play out in academic writing specifically, the guide to clear academic prose walks through practical techniques for lowering complexity without losing precision.

The goal is to write clearly for our specific reader, not to score well on a formula. Readability scores can help with that, but only if we understand what they're actually measuring.